AI-Native Autonomy Architecture for multi-vendor robot dogs — connecting any quadruped across all venues through real-time Fast LLM perception, Strategy LLM reasoning, and autonomous fleet coordination from edge to cloud. The robot learns, adapts, and decides on its own.

System Architecture

AI-Native Autonomy Stack

PhysicalAI is the AI-native autonomy architecture that sits above all robot hardware, spanning protocol-agnostic device integration, on-robot reactive intelligence with direct motor control, and scheduled strategic reasoning that writes memory and pushes new policies across your entire fleet.

REASONING LAYER

Three Engines. One Brain.

Three reasoning engines work at different speeds, from millisecond reflexes to fleet-wide strategy. Each engine perceives, reasons, and acts autonomously.

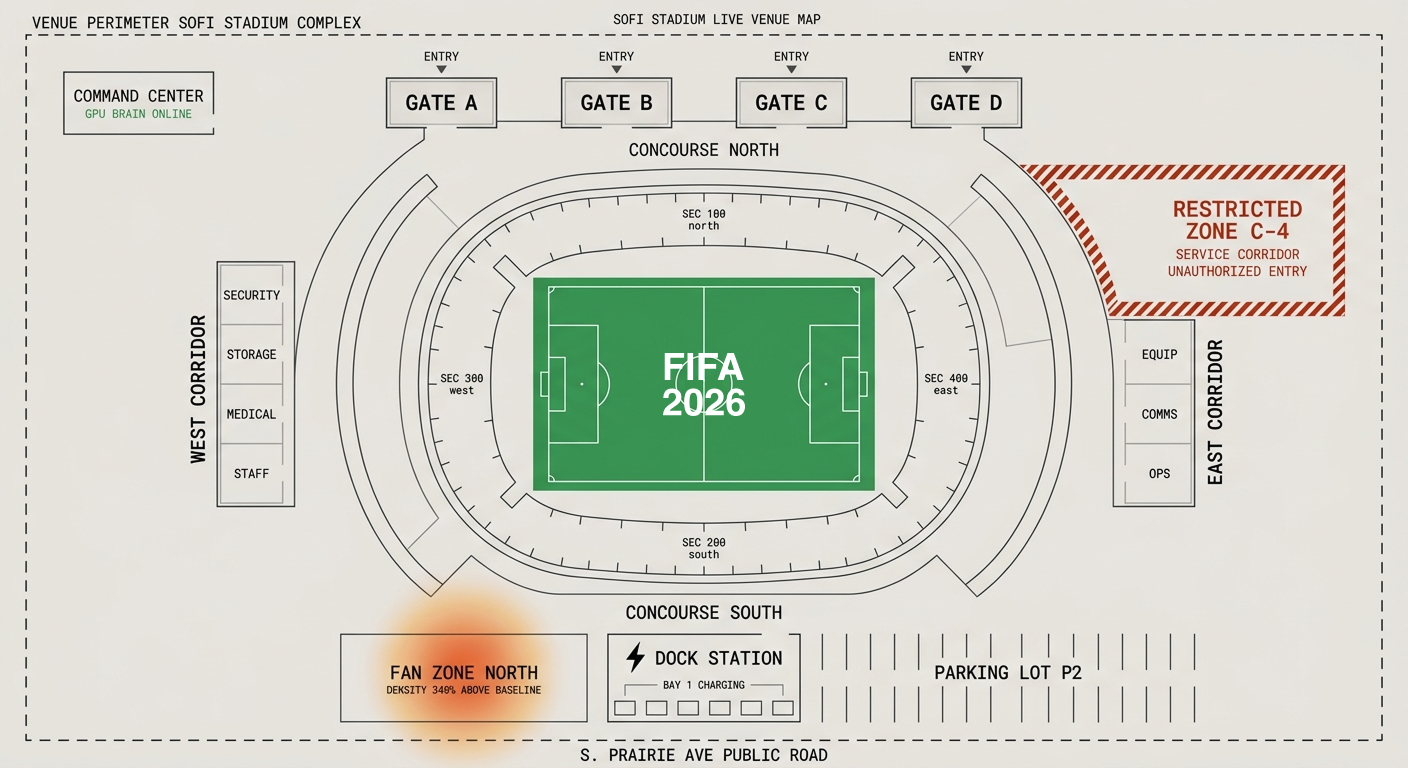

Command Center

Real-Time Fleet Operations

Live monitoring of all robot units across venue zones: every Fast LLM instance streams its state, every Strategy LLM decision is logged, and fleet-wide position, battery, and mission status are shown in real time.

Autonomous Response Pipeline

FAST LLM · ACT · ESCALATE · STRATEGY LLM
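
The four stages above can be read as a control flow: the on-robot Fast LLM perceives and acts immediately, and only some events are escalated to the Strategy LLM. The following is a minimal sketch of that flow, not the product's implementation. Every name here (`Observation`, `run_pipeline`, `fast_llm`, `strategy_llm`) is hypothetical, both model calls are replaced by trivial stand-in functions, and the low-confidence escalation rule with its `0.6` threshold is an assumption made purely for illustration.

```python
from dataclasses import dataclass, field
from typing import List, Optional

# Assumed for illustration: escalate when the fast model is unsure.
ESCALATION_THRESHOLD = 0.6


@dataclass
class Observation:
    robot_id: str
    label: str          # what the robot believes it perceived
    confidence: float   # 0.0 to 1.0


@dataclass
class PipelineResult:
    action: str                      # immediate on-robot action
    escalated: bool                  # whether the event went upstream
    policy: Optional[str] = None     # set only after escalation
    trace: List[str] = field(default_factory=list)


def fast_llm(obs: Observation) -> str:
    """Stand-in for the on-robot model: pick an immediate action."""
    return "hold_position" if obs.label == "obstacle" else "continue_patrol"


def strategy_llm(obs: Observation) -> str:
    """Stand-in for the slower strategic model: return a policy hint."""
    return f"review:{obs.label}"


def run_pipeline(obs: Observation) -> PipelineResult:
    trace = ["FAST LLM"]
    action = fast_llm(obs)           # perceive and decide on the robot
    trace.append("ACT")              # the robot acts before any escalation
    escalated = obs.confidence < ESCALATION_THRESHOLD
    policy = None
    if escalated:
        trace.append("ESCALATE")
        policy = strategy_llm(obs)   # slower reasoning, off the hot path
        trace.append("STRATEGY LLM")
    return PipelineResult(action, escalated, policy, trace)
```

In this sketch the robot always acts first, so escalation never delays the immediate response. A high-confidence observation stops after `ACT`, while a low-confidence one passes through all four stages.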

SoFi Stadium

Phase 1 — Active

Main venue. 70,240 capacity. Full fleet of 20 units. Primary command center GPU cluster deployed.

LAX Airport

Phase 1 — Active

International + domestic terminals. 8 robot units. Integrated with TSA coordination protocols.

Rose Bowl

Phase 2 — Planned

Pasadena venue. 88,565 capacity. Edge mission node deployed. Fleet onboarding Q1 2026.

Dignity Health

Phase 2 — Planned

Carson venue. Integration with existing arena security infrastructure. 12 units allocated.

Long Beach Airport

Phase 2 — Planned

Secondary fan arrival hub. 6 robot units. Fan zone and ground transport monitoring.

Fan Zones × 5

Phase 2 — Planned

Downtown LA, Hollywood, Santa Monica, Inglewood, Anaheim. Mobile observation units per zone.

Warehouses

Phase 5 — Future

Post-event platform expansion. Industrial patrol, hazard detection, inventory anomaly workflows.

Smart City

Phase 5 — Future

UAV coordination, wheeled robots, fixed camera fusion. The universal Physical AI Brain for any hardware.

Live Telemetry

Fast LLM Log Stream

Real-time streaming logs from every Fast LLM instance across all robots. Every perception cycle, motor command, state change, and Strategy LLM escalation is logged to the cloud and queryable in real time.
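
To make the four logged event types concrete, here is a minimal sketch of what one log record and a simple query over the stream might look like. The schema is an assumption: the field names (`ts`, `robot_id`, `kind`, `detail`), the JSON-lines encoding, and the `query` helper are all hypothetical and are not taken from the platform's actual log format.

```python
import json
from dataclasses import asdict, dataclass
from typing import Dict, Iterable, List, Optional

# The four event kinds named in the description above.
EVENT_KINDS = {"perception", "motor_command", "state_change", "escalation"}


@dataclass
class LogEvent:
    ts: float        # seconds since epoch (assumed unit)
    robot_id: str
    kind: str        # one of EVENT_KINDS
    detail: str

    def __post_init__(self) -> None:
        if self.kind not in EVENT_KINDS:
            raise ValueError(f"unknown event kind: {self.kind}")


def to_json_line(event: LogEvent) -> str:
    """Serialize one event as a single JSON line."""
    return json.dumps(asdict(event), sort_keys=True)


def query(lines: Iterable[str],
          kind: Optional[str] = None,
          robot_id: Optional[str] = None) -> List[Dict]:
    """Filter a stream of JSON lines by event kind and/or robot."""
    matches = []
    for line in lines:
        record = json.loads(line)
        if kind is not None and record["kind"] != kind:
            continue
        if robot_id is not None and record["robot_id"] != robot_id:
            continue
        matches.append(record)
    return matches


stream = [
    to_json_line(LogEvent(1.0, "dog-01", "perception", "crowd density high")),
    to_json_line(LogEvent(1.1, "dog-01", "motor_command", "slow to 0.5 m/s")),
    to_json_line(LogEvent(1.2, "dog-02", "escalation", "unattended bag")),
]
escalations = query(stream, kind="escalation")
```

One record per line keeps the stream append-only and lets a consumer filter events as they arrive instead of waiting for a complete batch.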